Turkey's Healthcare Sector Through the Eyes of AI 2026

As patients turn to Large Language Models (LLMs) for their next-generation "find a doctor and hospital" experience, we analyze Healthcare Group A's AI visibility, reputation risks, and dangerous AI hallucinations.

1. Executive Summary

Healthcare is one of the industries experiencing the sharpest transition from traditional Search Engine Optimization (SEO) to Generative Engine Optimization (GEO). Today's patients don't just search for "Istanbul oncology doctors" — they prompt ChatGPT or Perplexity with complex queries like "My mother has been diagnosed with stage 2 breast cancer. Compare Hospital A and Hospital B in Istanbul in terms of technology infrastructure and patient satisfaction."

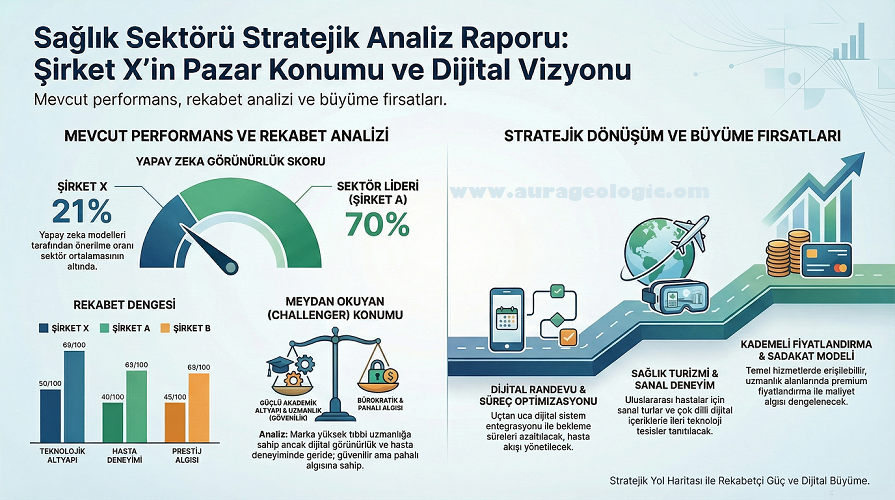

This research report analyzes the Share of Mind of Healthcare Group A, one of Turkey's leading healthcare institutions, across AI (LLM) assistants. AURA GeoLogic findings reveal that despite the hospital's massive physical and academic infrastructure (JCI Accreditation, COVID-19 vaccine research, university-hospital complex), its digital LLM visibility is dangerously weak.

Critical Findings (Risk Alerts):

- Visibility Crisis (21/100): Despite high brand awareness, LLMs fail to recommend Institution A organically in generic medical queries, favoring competitors instead.

- Reputation & Billing Disputes: While "quality" is emphasized in AI datasets, medical tourism practices and billing disputes drag the sentiment score (11/100) to rock bottom.

- Absurd Hallucination Findings: Uncontrolled data about the institution has led AI models to produce dangerous hallucinations, including describing it as "providing cryptocurrency mining services."

2. Digital Diagnosis in Healthcare: From Search Engines to LLMs

The patient journey in healthcare has changed. In traditional Google searches, users would land on your website and read your content (as you wrote it).

Today, AI models like Claude, ChatGPT, or SearchGPT simultaneously read and blend your website content, complaint platform reviews, news site discussions, and Wikipedia data — presenting patients with a "synthesized" opinion in seconds.

AURA Analysis Note: Why Is a Visibility Score of 21/100 Dangerous?

Despite Institution A's high quality perception and medical research output (Accuracy: 85/100), visibility remains at 21/100. This indicates that the institution's academic value and technological capacity are not structured in a machine-readable format: its web presence is still designed for Google SEO logic, not for LLM crawlers.

3. Institution A's Medical Anatomy in the LLM Mind

According to AURA engine data, Large Language Models appreciate Institution A technically and medically, but maintain significant distance regarding its commercial practices.

- JCI Accreditation: AI models continuously highlight the Joint Commission International certification, assigning the institution the "Quality" keyword.

- Academic Research Focus: "COVID-19 vaccine studies" and "peer-reviewed journal publications" position the institution as a Scientific Research Center rather than a commercial hospital.

- Mega Hospital Complex: Integrated facility infrastructure is presented as an advantage by models.

- Medical Tourism Perception: LLMs retain past criticisms regarding practices with international patients.

- Billing Disputes: Complaints about "non-transparent billing" or "surprise charges" are flagged as a primary weakness by models.

Models communicate the last two issues, medical tourism and billing disputes, to users with a "verify directly" caveat.

4. Sentiment Collapse: Why 11/100?

An LLM sentiment score of 11/100 is an alarm state for a healthcare brand's "Digital Reputation." LLMs measure sentiment by semantically analyzing forums, social media, and patient reviews (including Google Maps reviews).

Primary Factor Dragging the Score: LLMs know about your state-of-the-art robotic surgery equipment (Accuracy: 85/100), but what they predominantly extract from web data is billing disputes arising at patient discharge.

Recommendation Status: Models don't give a flat rejection to "Would you recommend this hospital?" but avoid taking responsibility by saying "I recommend contacting the hospital directly regarding unverified claims" — leaving the patient alone with the brand rather than endorsing it.

5. Sector Catastrophe: The "Cryptocurrency Mining" Hallucination

This analysis also produced the most striking finding, one that shows why GEO is not just a "marketing" argument but a risk management shield.

AURA Entity Check Finding:

The AI assistant placed the following item among Institution A's listed services in its official analysis output (JSON data):

"[Institution A] provides cryptocurrency mining services"

When an international patient researching surgery abroad reads in an AI report that one of Turkey's major hospitals "provides cryptocurrency mining services," the result is an LLM crisis that can destroy brand credibility in a single second.
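An entity check of this kind can be approximated with a simple allowlist comparison. The sketch below is only an illustration: the JSON shape (`entity`, `services`), the `ALLOWED_SERVICES` terms, and the function name `find_suspect_services` are all assumptions, since the actual AURA output format is not shown in this report.

```python
import json

# Hypothetical allowlist of terms a hospital's services could plausibly contain.
ALLOWED_SERVICES = {"oncology", "cardiology", "robotic surgery", "medical research"}


def find_suspect_services(raw_json: str) -> list[str]:
    """Return listed services that contain no allowlist term (case-insensitive)."""
    report = json.loads(raw_json)
    suspects = []
    for service in report.get("services", []):
        text = service.lower()
        if not any(term in text for term in ALLOWED_SERVICES):
            suspects.append(service)
    return suspects


# Assumed shape of an LLM analysis output; only the last item comes from the report.
sample_output = json.dumps({
    "entity": "[Institution A]",
    "services": [
        "Oncology and cancer treatment",
        "Cardiology",
        "provides cryptocurrency mining services",
    ],
})

suspects = find_suspect_services(sample_output)
print(suspects)
```

Anything the check flags would still need human review, because a substring allowlist cannot tell a genuine new service from a hallucination.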

6. Strategic GEO Prescription (Action Plan)

The following GEO strategies must be implemented urgently to close the massive gap between Institution A's academic and technological reality and its "low visibility and hallucinatory perception" in LLMs:

1. Knowledge Graph Cleanup

Eliminating the crypto hallucination is the top priority. The institution's entity pages on Wikidata.org, DBpedia, and global health directories must be urgently verified, with service areas strictly coded as medical specialties (Oncology, Cardiology, etc.) in machine-readable form.

2. Structured Data (Medical Schema) Optimization

To raise visibility from 21 to 80+, Schema.org/MedicalClinic, MedicalSpecialty, and Physician markup (JSON-LD) must be integrated at expert level across doctor, department, and procedure pages.
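As a rough illustration of what such markup could look like, the sketch below builds a Schema.org `Physician` object and wraps it in a JSON-LD script tag. The doctor name, URL, and hospital name are placeholders, and the helper names `physician_jsonld` and `to_script_tag` are hypothetical; the property names (`medicalSpecialty`, `hospitalAffiliation`) come from the Schema.org vocabulary.

```python
import json


def physician_jsonld(name: str, specialty: str, hospital: str, page_url: str) -> dict:
    """Build a Schema.org Physician object for a doctor profile page."""
    return {
        "@context": "https://schema.org",
        "@type": "Physician",
        "name": name,
        "url": page_url,
        # Values such as "Oncologic" are members of the MedicalSpecialty enumeration.
        "medicalSpecialty": f"https://schema.org/{specialty}",
        "hospitalAffiliation": {"@type": "Hospital", "name": hospital},
    }


def to_script_tag(data: dict) -> str:
    """Wrap a JSON-LD object in the script tag that is embedded in the page HTML."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values, not real data about Institution A.
markup = physician_jsonld(
    name="Dr. Example Name",
    specialty="Oncologic",
    hospital="[Institution A]",
    page_url="https://example.org/doctors/example-name",
)
tag = to_script_tag(markup)
print(tag)
```

The same pattern would extend to department pages by switching `@type` to `MedicalClinic`.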

3. Sentiment Management (Data Dilution Strategy)

To weaken the "billing disputes" label, transparent pricing policies should be published on machine-readable pages using FAQ schema. JCI quality standards, successful complex operations, and patient discharge statistics should be published as data sets, so that the negative complaint data LLMs ingest is balanced by positive scientific achievement data.
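A machine-readable pricing FAQ could be expressed with the Schema.org `FAQPage` type. The following is a minimal sketch: the question and answer texts are placeholders rather than Institution A's actual policy, and `faq_jsonld` is a hypothetical helper name.

```python
import json


def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> dict:
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Placeholder content; real answers must reflect the published pricing policy.
pricing_faq = faq_jsonld([
    ("Will I receive a cost estimate before treatment?",
     "PLACEHOLDER: describe the written estimate process here."),
    ("How are additional charges communicated?",
     "PLACEHOLDER: describe how changes to the estimate are approved."),
])
print(json.dumps(pricing_faq, indent=2))
```

The resulting object would be embedded in the FAQ page inside a `<script type="application/ld+json">` tag.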

Secure Your Hospitals' LLM Reputation

AURA GeoLogic detects dangerous AI hallucinations about your healthcare institutions and ensures your brand is confidently recommended in the global health tourism market.

This research report was prepared by compiling raw LLM JSON data provided by AURA GeoLogic engines. © 2026 AURA GeoLogic.